- Where did you get the camera module(s)?

- Model number of the product(s)?

IMX519

- What hardware/platform were you working on?

NVIDIA Jetson Nano B01.

- Instructions you have followed. (link/manual/etc.)

ArduCAM/Jetson_IMX519_Focus_Example (github.com)

```
werate-01@werate-01:~/Arducam-IMX519$ v4l2-ctl --list-formats-ext
ioctl: VIDIOC_ENUM_FMT
    Index : 0
    Type : Video Capture
    Pixel Format: 'RG10'
    Name : 10-bit Bayer RGRG/GBGB
        Size: Discrete 4656x3496
            Interval: Discrete 0.100s (10.000 fps)
        Size: Discrete 3840x2160
            Interval: Discrete 0.048s (21.000 fps)
        Size: Discrete 1920x1080
            Interval: Discrete 0.017s (60.000 fps)
        Size: Discrete 1280x720
            Interval: Discrete 0.008s (120.000 fps)
```
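To make the mode list easier to compare, here is a minimal Python sketch that parses the `Size`/`Interval` lines of the v4l2-ctl listing and prints each mode's aspect ratio and frame rate. It assumes the output format shown here (one `Interval` line after each `Size` line); `LISTING` and `parse_modes` are hypothetical names used only for illustration.

```python
import re

# Size/Interval lines copied from the v4l2-ctl output (indentation dropped).
LISTING = """\
Size: Discrete 4656x3496
Interval: Discrete 0.100s (10.000 fps)
Size: Discrete 3840x2160
Interval: Discrete 0.048s (21.000 fps)
Size: Discrete 1920x1080
Interval: Discrete 0.017s (60.000 fps)
Size: Discrete 1280x720
Interval: Discrete 0.008s (120.000 fps)
"""

SIZE_RE = re.compile(r"Size: Discrete (\d+)x(\d+)")
FPS_RE = re.compile(r"\(([\d.]+) fps\)")


def parse_modes(text):
    """Return (width, height, fps) tuples, pairing each Size line
    with the first Interval line that follows it."""
    modes = []
    size = None
    for line in text.splitlines():
        size_match = SIZE_RE.search(line)
        if size_match:
            size = (int(size_match.group(1)), int(size_match.group(2)))
            continue
        fps_match = FPS_RE.search(line)
        if fps_match and size is not None:
            modes.append((size[0], size[1], float(fps_match.group(1))))
            size = None  # only take the first interval for each size
    return modes


for w, h, fps in parse_modes(LISTING):
    # e.g. "4656x3496: 1.33:1 at 10 fps"
    print(f"{w}x{h}: {w / h:.2f}:1 at {fps:g} fps")
```

Only the full-resolution 4656x3496 mode comes out near 1.33:1; the three faster modes are all about 1.78:1.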

- Problems you were having?

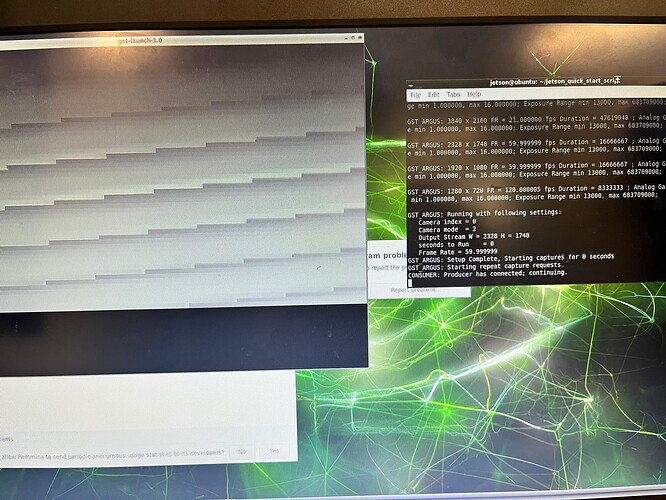

I can’t use the 2328x1748 sensor mode, which is available when the IMX519 is plugged into a Raspberry Pi 4B. This mode is not listed on the NVIDIA Jetson Nano B01.

- Troubleshooting attempts you’ve made?

I use the 4656x3496 mode because it has the same aspect ratio (about 1.33:1, close to 4:3). However, it is slow: it only runs at 10 fps instead of 30 fps.
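A small Python check of the aspect-ratio comparison, using exact fractions instead of rounded decimals. The mode table is copied from the v4l2-ctl listing; `JETSON_MODES`, `PI_MODE`, `aspect` and `same_aspect_modes` are hypothetical names for illustration. It shows that 2328x1748 has exactly the same ratio as 4656x3496 (and is exactly half of it in each dimension), while the other three Jetson modes are 16:9.

```python
from fractions import Fraction

# Modes listed by v4l2-ctl on the Jetson Nano: (width, height) -> fps
JETSON_MODES = {
    (4656, 3496): 10.0,
    (3840, 2160): 21.0,
    (1920, 1080): 60.0,
    (1280, 720): 120.0,
}
# Mode available on the Raspberry Pi 4B but missing on the Jetson.
PI_MODE = (2328, 1748)


def aspect(w, h):
    """Exact aspect ratio as a reduced fraction."""
    return Fraction(w, h)


def same_aspect_modes(target, modes):
    """Modes whose aspect ratio exactly matches `target`, fastest first."""
    ratio = aspect(*target)
    matches = [(size, fps) for size, fps in modes.items() if aspect(*size) == ratio]
    return sorted(matches, key=lambda item: item[1], reverse=True)


print(aspect(*PI_MODE))  # 582/437 (about 1.33, close to but not exactly 4:3)
print(aspect(1920, 1080))  # 16/9
print(same_aspect_modes(PI_MODE, JETSON_MODES))  # [((4656, 3496), 10.0)]
print(PI_MODE[0] * 2, PI_MODE[1] * 2)  # 4656 3496
```

So among the modes the Jetson currently exposes, the only one matching the Pi's 2328x1748 ratio is the 10 fps full-resolution mode.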

- What help do you need?

I need help using the 2328x1748 mode, or any mode with a 1.33:1 aspect ratio and a higher frame rate, on the NVIDIA Jetson Nano B01 (the other available modes are about 1.78:1, i.e. 16:9).