
Where did you get the camera module(s)?

UCTRONICS

Model number of the product(s)?

UC-512 rev C

2x UC-599 rev B

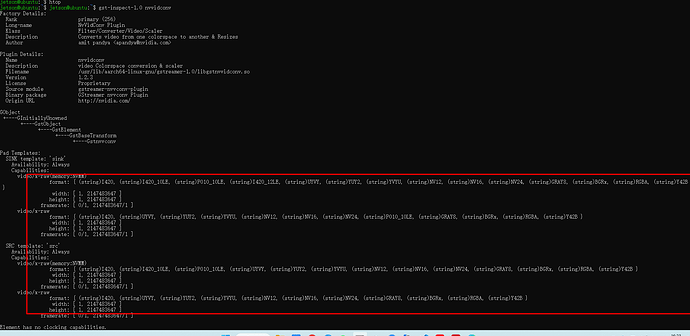

What hardware/platform were you working on?

Jetson Nano 4GB, L4T 32.7.2

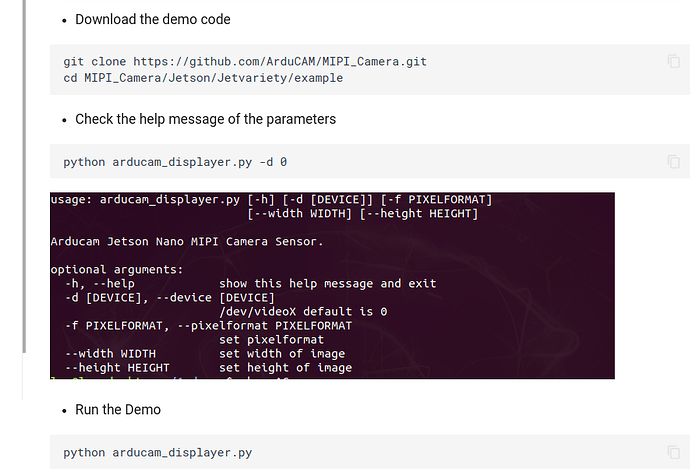

Instructions you have followed. (link/manual/etc.)

install_full.sh -m arducam

Problems you were having?

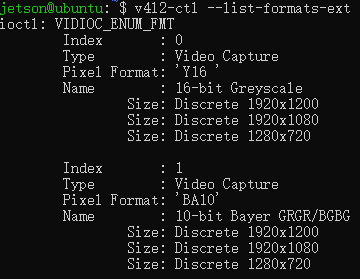

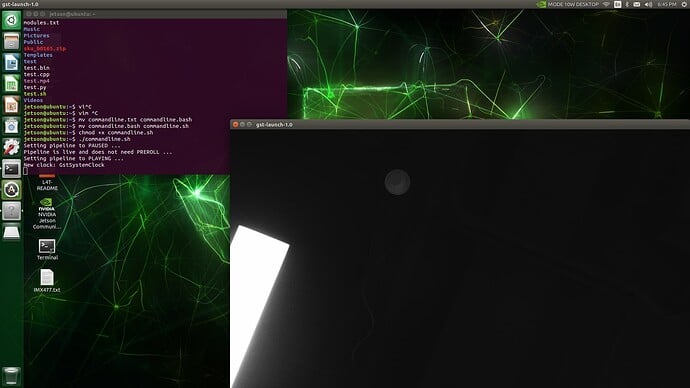

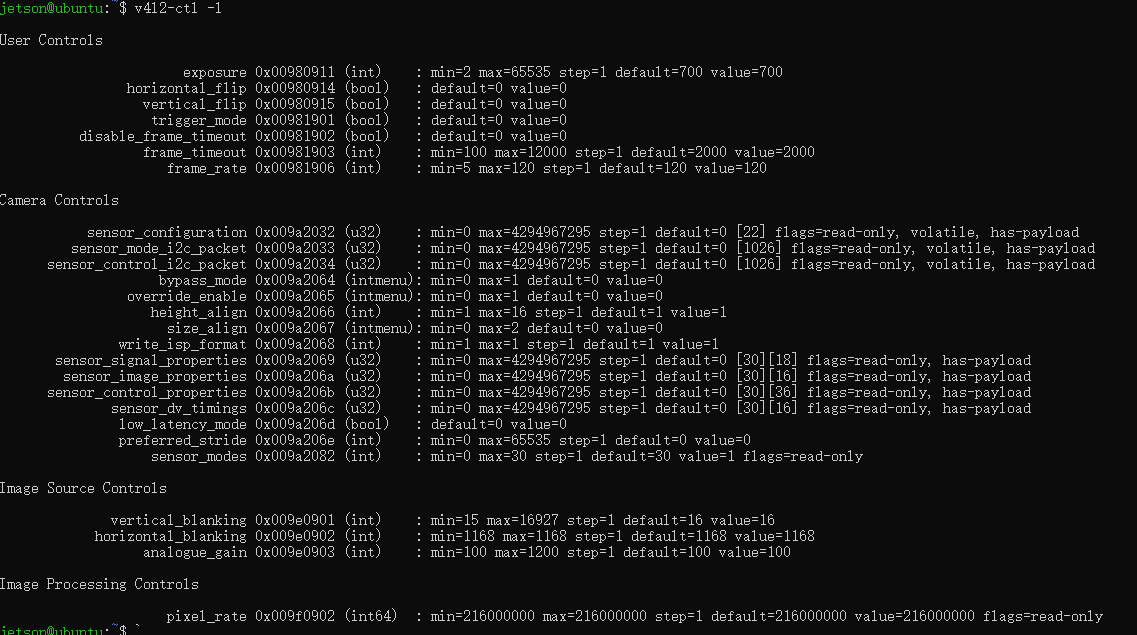

The driver installation seemed to go fine. I get /dev/video0, and v4l2-ctl --list-formats-ext returns coherent output.

However, when I run nvarguscamerasrc, I get:

Error generated. /dvs/git/dirty/git-master_linux/multimedia/nvgstreamer/gst-nvarguscamera/gstnvarguscamerasrc.cpp, execute:740 No cameras available

The dmesg log from your hardware?

dmesg | grep -E "imx477|imx219|arducam"

[ 0.212373] DTS File Name: /var/jenkins_home/workspace/n_nano_kernel_l4t-32.7.1-arducam/kernel/kernel-4.9/arch/arm64/boot/dts/…/…/…/…/…/…/hardware/nvidia/platform/t210/porg/kernel-dts/tegra210-p3448-0000-p3449-0000-b00.dts

[ 0.420637] DTS File Name: /var/jenkins_home/workspace/n_nano_kernel_l4t-32.7.1-arducam/kernel/kernel-4.9/arch/arm64/boot/dts/…/…/…/…/…/…/hardware/nvidia/platform/t210/porg/kernel-dts/tegra210-p3448-0000-p3449-0000-b00.dts

[ 1.314532] arducam-csi2 7-000c: firmware version: 0x0003

[ 1.314888] arducam-csi2 7-000c: Sensor ID: 0x0000

[ 1.377781] arducam-csi2 7-000c: sensor arducam-csi2 7-000c registered

[ 1.402163] arducam-csi2: arducam_read: Reading register 0x103 failed

[ 1.402169] arducam-csi2 8-000c: probe failed

[ 1.402205] arducam-csi2 8-000c: Failed to setup board.

[ 1.550697] vi 54080000.vi: subdev arducam-csi2 7-000c bound
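To make the log above easier to read at a glance, here is a minimal sketch that condenses the arducam-csi2 lines into one status per I2C device. The function name summarize_probe and the "ok"/"failed"/"unknown" labels are my own, not part of any Arducam tool, and the matching assumes the message wording stays exactly as in the excerpt above.

```shell
#!/bin/sh
# Hypothetical helper: summarize arducam-csi2 probe results per I2C device.
# Reads dmesg-style text on stdin and prints "<bus>-<addr> <status>" lines.
summarize_probe() {
  awk '
    match($0, /arducam-csi2 [0-9]+-[0-9a-f]+/) {
      # Drop the 13-character "arducam-csi2 " prefix, keeping e.g. "7-000c".
      dev = substr($0, RSTART + 13, RLENGTH - 13)
      if (!(dev in status)) status[dev] = "unknown"
      # A failure message wins over any earlier or later success message.
      if ($0 ~ /probe failed|Failed to setup/) status[dev] = "failed"
      else if ($0 ~ /registered|bound/ && status[dev] != "failed") status[dev] = "ok"
    }
    END { for (dev in status) print dev, status[dev] }
  ' | sort
}

# Example run on the relevant lines from the log above.
summarize_probe <<'EOF'
[ 1.314532] arducam-csi2 7-000c: firmware version: 0x0003
[ 1.377781] arducam-csi2 7-000c: sensor arducam-csi2 7-000c registered
[ 1.402163] arducam-csi2: arducam_read: Reading register 0x103 failed
[ 1.402169] arducam-csi2 8-000c: probe failed
[ 1.402205] arducam-csi2 8-000c: Failed to setup board.
[ 1.550697] vi 54080000.vi: subdev arducam-csi2 7-000c bound
EOF
```

On the board itself the same function can be fed with dmesg | summarize_probe. For the excerpt above it reports 7-000c as ok and 8-000c as failed, which matches what the raw log shows: the device on bus 7 registers and binds, while the one on bus 8 fails its probe.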


Troubleshooting attempts you’ve made?

Not much. I worked through the troubleshooting guides in your manuals, but none of them gave any insight into this particular problem.

What help do you need?

Please help me debug this further. Do you know where I could keep pulling on this thread? Thanks!